CREECH AIR FORCE BASE, NV – AUGUST 08: Mechanics trail an MQ-9 Reaper as it taxis for takeoff August 8, 2007 at Creech Air Force Base in Indian Springs, Nevada. The Reaper is the Air Force’s first “hunter-killer” unmanned aerial vehicle (UAV), designed to engage time-sensitive targets on the battlefield as well as provide intelligence and surveillance. The jet-fighter sized Reapers are 36 feet long with 66-foot wingspans and can fly for up to 14 hours fully loaded with laser-guided bombs and air-to-ground missiles. They can fly twice as fast and high as the smaller MQ-1 Predators, reaching speeds of 300 mph at an altitude of up to 50,000 feet. The aircraft are flown by a pilot and a sensor operator from ground control stations. The Reapers are expected to be used in combat operations by the U.S. military in Afghanistan and Iraq within the next year. (Photo by Ethan Miller/Getty Images)

The Great A.I. Awakening: A Conversation with Eric Schmidt Feb 23, 2017

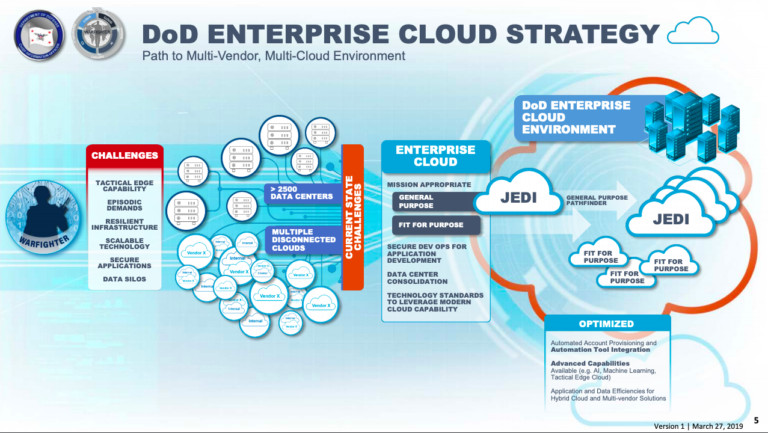

As readers might have noticed, the battle between Amazon and Microsoft over who will get the $10 billion DoD cloud computing contract is still hot.

And maybe the contract will be split.

But what is the JEDI cloud actually useful for?

And why did Google employees protest this project so strongly that Google opted out of the contract race completely? (Also, Trump seems to favor Microsoft, for whatever reason.)

One does not have all the answers, but it is likely they are aiming in this direction:

https://breakingdefense.com/tag/jedi/

The battlefield of the future will swarm with interconnected “devices”, sensors and robots, and all of them need to be connected to each other and to human soldiers.

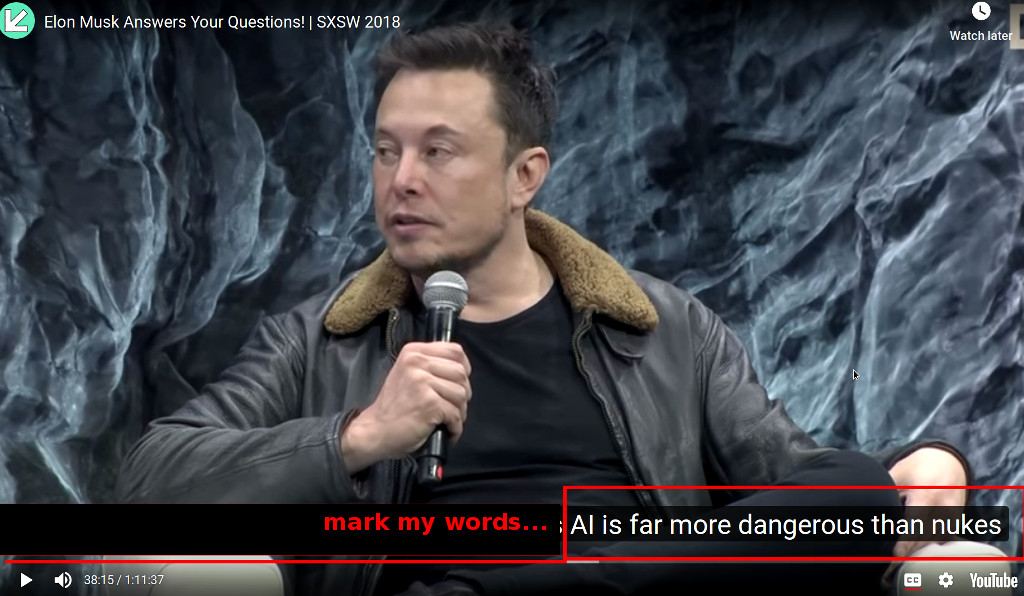

Readers might have noticed Elon Musk saying:

This means: Elon Musk believes that AI could become so advanced and out of control that mankind might face a “Terminator 2” or “The Matrix”-like scenario, in which mankind’s own creation gets “out of hand” and mankind then has to fight an AI that is many times more intelligent than a human or even a team of humans, which would make winning such a battle pretty much impossible.

But this does not prevent the military from researching and maybe even one day fielding such autonomous weapons.

The militaries of several nations around the globe are researching autonomous weapons systems, i.e. AI-enhanced robots and drones.

“go robot kill everyone in this or that area”

The question is: can those autonomous weapons distinguish between combatant and civilian?

Probably not.

Presumably they simply detect a moving heat signature, try to determine whether it is an animal or a human, fire a few rounds until the heat signature stops moving (= is dead) and then move on to the next target.

“The Defense Department has always tested and evaluated their systems to make sure that they perform reliably as intended.

But the Board warns that AI weapon systems can be “non-deterministic, nonlinear, high-dimensional, probabilistic, and continually learning.”

When they have these characteristics, traditional testing and validation techniques are “insufficient.””
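To make the Board's point a bit more tangible, here is a minimal Python sketch. It is not how any real weapon system works: `deterministic_system`, `probabilistic_system`, `outputs_over_runs` and the input value 0.7 are made-up stand-ins. It only illustrates why a test that passes once says little about a system whose decision is sampled rather than computed.

```python
import random


def deterministic_system(x):
    # Classic software: the same input always gives the same output.
    return x > 0.5


def probabilistic_system(x, rng):
    # Hypothetical stand-in for a "probabilistic" component:
    # the decision is sampled, so identical inputs can give different outputs.
    return rng.random() < x


def outputs_over_runs(system, x, runs):
    # Collect every distinct answer the system gives for the same input.
    return {system(x) for _ in range(runs)}


rng = random.Random(0)  # fixed seed so the sketch is repeatable
det = outputs_over_runs(deterministic_system, 0.7, 1000)
prob = outputs_over_runs(lambda x: probabilistic_system(x, rng), 0.7, 1000)

print("deterministic answers:", det)
print("probabilistic answers:", prob)
```

For the deterministic function, one test run per input is enough to know what it will do next time. For the sampled one, the same input produces both answers over many runs, so a single passing test proves very little, and a system that also keeps learning would not even keep the same odds over time.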

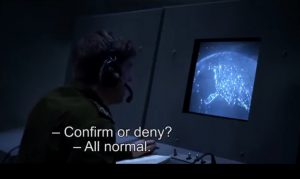

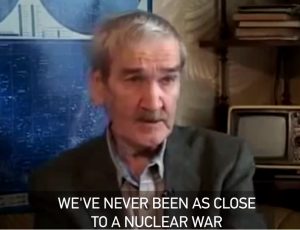

It happened before: World War 3 could have been started by the malfunction of a computer system.

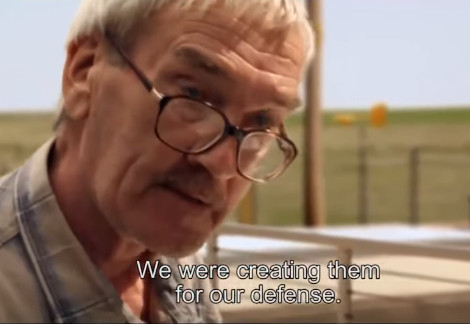

Stanislav Petrov, a former Soviet officer, effectively prevented the worst possible outcome, a nuclear World War 3 and the self-destruction of mankind, by reasoning for himself instead of relying on the computer systems that told him multiple nuclear missiles were incoming.

In the end it turned out to be a malfunction of the computer system.

See the full documentary about Stanislav Petrov here: https://www.youtube.com/watch?v=8TNdihbV5go

“On 26 September 1983, the computers in the Serpukhov-15 bunker outside Moscow, which housed the command center of the Soviet early warning satellite system, twice reported that U.S. intercontinental ballistic missiles were heading toward the Soviet Union. Stanislav Petrov, who was duty officer that night, suspected that the system was malfunctioning and managed to convince his superiors of the same thing. He argued that if the U.S. was going to attack pre-emptively it would do so with more than just five missiles and that it was best to wait for ground radar confirmation before launching a counter-attack.” (src: https://youtu.be/8TNdihbV5go )

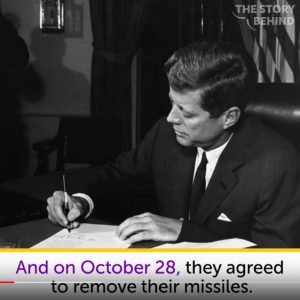

Cuban Missile Crisis (not related to computers, more about the question: how many weapons are enough?)

https://en.wikipedia.org/wiki/Vasily_Arkhipov_(vice_admiral)

“off switch for AI weapons systems”

src: https://www.brookings.edu/blog/techtank/2019/11/15/assessing-ethical-ai-principles-in-defense/amp/

“The deadly drones are the likely “endpoint” of the current technological march towards lethal autonomous weapons systems (Laws), according to Professor Stuart Russell from the University of California at Berkeley.”

UK unmanned stealth drone

Also very, very scary:

The BAE Systems Taranis (also nicknamed “Raptor”) is a British demonstrator programme for unmanned combat aerial vehicle (UCAV) technology, under development primarily by the defence contractor BAE Systems Military Air & Information. The aircraft, which is named after the Celtic god of thunder Taranis, first flew in 2013.

src: https://en.wikipedia.org/wiki/BAE_Systems_Taranis

open letter to UN to regulate AI weapons

“In January 2015, Stephen Hawking, Elon Musk, and dozens of artificial intelligence experts signed an open letter on artificial intelligence calling for research on the societal impacts of AI.

The letter affirmed that society can reap great potential benefits from artificial intelligence, but called for concrete research on how to prevent certain potential “pitfalls”: artificial intelligence has the potential to eradicate disease and poverty, but researchers must not create something which cannot be controlled.

The four-paragraph letter, titled “Research Priorities for Robust and Beneficial Artificial Intelligence: An Open Letter”, lays out detailed research priorities in an accompanying twelve-page document.”

the document:

https://futureoflife.org/data/documents/research_priorities.pdf

Signatories

Signatories include:

- physicist Stephen Hawking

- business magnate Elon Musk

- the co-founders of DeepMind and Vicarious

- Google’s director of research Peter Norvig

- Professor Stuart J. Russell of the University of California, Berkeley

- and other AI experts, robot makers, programmers, and ethicists

The original signatory count was over 150 people, including academics from Cambridge, Oxford, Stanford, Harvard, and MIT.

(src: https://en.wikipedia.org/wiki/Open_Letter_on_Artificial_Intelligence)

https://www.theverge.com/2019/10/25/20700698/microsoft-pentagon-contract-jedi-cloud-amazon-details

Google Exits Pentagon ‘JEDI’ Project After Employee Protests

Bots of War

The UN’s Convention on Certain Conventional Weapons held its first formal meeting of government experts on autonomous weapons in November 2017 and discussed the ramifications of such weapons.

Links:

https://autonomousweapons.org/
